No real use you say? How would they engineer boats without floats?

Just invert a sink.

Serious answer: Posits seem cool, like they do most of what floats do, but better (in a given amount of space). I think supporting them in hardware would be awesome, but of course there's a chicken and egg problem there with supporting them in programming languages.

Posits aside, that page had one of the best, clearest explanations of how floating point works that I've ever read. The authors of my college textbooks could have learned a thing or two about clarity from this writer.

As a programmer who grew up without an FPU (Archimedes/Acorn), I have never liked float. But I thought this war had been lost a long time ago. Floats are everywhere. I've not done graphics for a bit, but I never saw a graphics card that took any form of fixed point. All geometry you load in is in floats. The shaders all work in floats.

Briefly ARM MCU work was non-float, but loads of those have float support now.

I mean you can tell good low level programmers because of how they feel about floats. But the battle does seem lost. There are lots of bits of technology that have taken turns I don't like. Sometimes the market/bazaar has spoken and it's wrong, but you still have to grudgingly go with it or everything is too difficult.

Floats make a lot of math way simpler, especially for audio, but then you run into the occasional NaN error.

On the PS3 Cell processor vector units, any NaN meant zero. Makes life easier if there are errors in the data.

all work in floats

We even have float16 / float8 now for low-accuracy hi-throughput work.

Even float4. You get +/- 0, 0.5, 1, 1.5, 2, 3, Inf, and two values for NaN.
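For anyone who wants to see where that list comes from, here is a rough sketch. It assumes an IEEE-style layout of 1 sign bit, 2 exponent bits and 1 mantissa bit with a bias of 1; that layout is my assumption (it happens to reproduce the values above), and `decode_float4` is a made-up helper, not a library function. Other 4-bit formats exist that spend those bit patterns differently.

```python
import math

def decode_float4(bits: int) -> float:
    """Decode a hypothetical IEEE-style 4-bit float: 1 sign, 2 exponent, 1 mantissa bit, bias 1."""
    sign = -1.0 if bits & 0b1000 else 1.0
    exponent = (bits >> 1) & 0b11
    mantissa = bits & 0b1
    if exponent == 0b11:
        # All-ones exponent: mantissa 0 is infinity, mantissa 1 is NaN.
        return sign * math.inf if mantissa == 0 else math.nan
    if exponent == 0:
        # Subnormal range: no implicit leading 1, so only 0 and 0.5.
        return sign * (mantissa / 2)
    # Normal range: implicit leading 1, exponent offset by the bias of 1.
    return sign * (1 + mantissa / 2) * 2.0 ** (exponent - 1)

# Print all 16 bit patterns with their decoded values.
for bits in range(16):
    print(f"{bits:04b} -> {decode_float4(bits)}")
```

Half of the 16 patterns are the negative mirror of the other half, and two of them (`0111` and `1111`) decode to NaN.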

Come to think of it, the idea of -NaN tickles me a bit. "It's not a number, but it's a negative not a number".

I think you got that wrong, you got +Inf, -Inf and two NaNs, but they're both just NaN. As you wrote, a signed NaN makes no sense, though technically speaking they still have a sign bit.

Right, there's no -NaN. There are two different values of NaN. Which is why I tried to separate that clause, but maybe it wasn't clear enough.
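The same thing can be poked at with ordinary 64-bit doubles. This is only a sketch: the two bit patterns are the usual quiet-NaN encodings with the sign bit clear and set, and `double_from_bits` / `bits_from_double` are helper names I made up.

```python
import math
import struct

def double_from_bits(bits: int) -> float:
    """Reinterpret a 64-bit integer as an IEEE 754 double."""
    return struct.unpack(">d", struct.pack(">Q", bits))[0]

def bits_from_double(x: float) -> int:
    """Reinterpret an IEEE 754 double as a 64-bit integer."""
    return struct.unpack(">Q", struct.pack(">d", x))[0]

QUIET_NAN = 0x7FF8_0000_0000_0000      # sign bit clear
NEG_QUIET_NAN = 0xFFF8_0000_0000_0000  # sign bit set

a = double_from_bits(QUIET_NAN)
b = double_from_bits(NEG_QUIET_NAN)

print(math.isnan(a), math.isnan(b))                 # both are NaN
print(a == a, a == b)                               # NaN never compares equal
print(hex(bits_from_double(a)), hex(bits_from_double(b)))  # but the stored bits differ
```

So the sign bit is physically there, but comparisons and `isnan` treat both values as plain NaN.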

IMO, floats model real observations.

And since there is no precision in nature, there shouldn't be precision in floats either.

So their odd behavior is actually entirely justified. This is why I can accept them.

I just gave up fighting. There is no system that is going to be both fast and infinitely precise.

So long ago I worked in a game middleware company. One of the most common problems was skinning in local space vs global space. We kept having customers try to have global skinning and massive worlds, then get upset by geometry distortion when miles away from the origin.
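A quick way to see why distance from the origin matters: the gap between adjacent 32-bit floats grows with magnitude. The sketch below rounds Python's 64-bit floats to 32 bits with `struct`; the distances (read them as metres, which is my assumption) and the 1 mm nudge are illustrative only.

```python
import struct

def to_float32(x: float) -> float:
    """Round a Python float (64-bit) to the nearest 32-bit float."""
    return struct.unpack("f", struct.pack("f", x))[0]

# Try to move a vertex by 1 mm at increasing distances from the origin.
for distance in (1.0, 1_000.0, 100_000.0, 10_000_000.0):
    nudged = to_float32(distance + 0.001)
    actual_step = nudged - to_float32(distance)
    print(f"at {distance:>12,.0f}: asked for 0.001, got {actual_step}")
```

Near the origin the nudge survives almost intact; at 100,000 it rounds away entirely, which is the kind of snapping that shows up as distorted skinned geometry.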

How do y'all solve that, out of curiosity?

I'm a hobbyist game dev and when I was playing with large map generation I ended up breaking the world into a hierarchy of map sections. Tiles in a chunk were locally mapped using floats within comfortable boundaries. But when addressing portions of the map, my global coordinates included the chunk coords as an extra pair.

So an object's location in the 2D world map might be ((122, 45), (12.522, 66.992)), where the first elements are the map chunk location and the last two are the precise "offset" coordinates within that chunk.

It wasn't the most elegant to work with, but I was still able to generate an essentially limitless map without floating point errors poking holes in my tiling.
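A minimal sketch of that chunk-plus-offset scheme, for anyone who wants to try it. `WorldPos`, `CHUNK_SIZE`, `moved` and `delta` are names I made up, and the chunk size of 128 units is an arbitrary assumption; the original comment doesn't say how it was implemented.

```python
from dataclasses import dataclass
from typing import Tuple

CHUNK_SIZE = 128.0  # assumed chunk edge length in world units

@dataclass(frozen=True)
class WorldPos:
    chunk: Tuple[int, int]       # integer chunk coordinates, exact at any distance
    offset: Tuple[float, float]  # small float offset inside the chunk

    def moved(self, dx: float, dy: float) -> "WorldPos":
        """Return a new position, carrying any overflow into the chunk coordinates."""
        cx_shift, x = divmod(self.offset[0] + dx, CHUNK_SIZE)
        cy_shift, y = divmod(self.offset[1] + dy, CHUNK_SIZE)
        return WorldPos(
            (self.chunk[0] + int(cx_shift), self.chunk[1] + int(cy_shift)),
            (x, y),
        )

def delta(a: WorldPos, b: WorldPos) -> Tuple[float, float]:
    """Vector from a to b; the chunk difference is computed in integers first."""
    return (
        (b.chunk[0] - a.chunk[0]) * CHUNK_SIZE + (b.offset[0] - a.offset[0]),
        (b.chunk[1] - a.chunk[1]) * CHUNK_SIZE + (b.offset[1] - a.offset[1]),
    )

start = WorldPos((122, 45), (12.522, 66.992))
end = start.moved(120.0, -70.0)
print(end)
print(delta(start, end))
```

The floats only ever hold values around the chunk size, so their precision stays the same no matter how far the chunk indices run.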

I've always been curious how that gets done in real game dev though. If you don't mind sharing, I'd love to learn!

That's pretty neat. Game streaming isn't that different. It basically loads the adjacent scene blocks ready for you to wander in that direction. Some load in LOD (Level Of Detail) versions of the scene blocks so you can see into the distance. The further away, the lower the LOD of course. Also, you shouldn't really keep the same origin, or you will hit the distorted geometry issue. Have the origin as the centre of the current block.

I know this is in jest, but if 0.1+0.2!=0.3 hasn't caught you out at least once, then you haven't even done any programming.

IMO they should just remove the equality operator on floats.
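For reference, this is the classic case and the usual workaround in Python, comparing with a tolerance instead of `==`. It's just a sketch using the default relative tolerance of `math.isclose`; the right tolerance depends on the problem.

```python
import math

total = 0.1 + 0.2

print(total)                     # 0.30000000000000004
print(total == 0.3)              # False
print(math.isclose(total, 0.3))  # True

# Caveat: a purely relative tolerance never matches against exactly 0.0,
# so comparisons near zero need an absolute tolerance as well.
print(math.isclose(1e-12, 0.0))                 # False
print(math.isclose(1e-12, 0.0, abs_tol=1e-9))   # True
```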

That should really be written as the gamma function, because factorial is only defined for members of Z. /s

Obviously floating point is of huge benefit for many audio dsp calculations, from my observations (non-programmer, just long time DAW user, from back in the day when fixed point with relatively low accumulators was often what we had to work with, versus now when 64bit floating point for processing happens more as the rule) - e.g. fixed point equalizers can potentially lead to dc offset in the results. I don't think peeps would be getting as close to modeling non-linear behavior of analog processors with just fixed point math either.
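One plausible way fixed point can produce a DC offset is truncation bias: if every multiply throws away the low bits by rounding down, the error always points the same way. The sketch below is a toy, not a model of any real equalizer; the Q15 format, the 0.7 gain and the ramp "signal" are all assumptions picked to make the effect easy to see.

```python
Q = 15                               # Q15 fixed point: 15 fractional bits
GAIN_Q15 = round(0.7 * (1 << Q))     # gain of roughly 0.7 as an integer

# A symmetric test signal whose mean is exactly zero.
samples = list(range(-1000, 1001))

def scale_truncating(x: int) -> int:
    """Multiply by the gain and drop the low bits (rounds toward minus infinity)."""
    return (x * GAIN_Q15) >> Q

def scale_rounding(x: int) -> int:
    """Multiply by the gain and round to nearest by adding half an LSB first."""
    return (x * GAIN_Q15 + (1 << (Q - 1))) >> Q

truncated = [scale_truncating(x) for x in samples]
rounded = [scale_rounding(x) for x in samples]

print("mean in:       ", sum(samples) / len(samples))
print("mean truncated:", sum(truncated) / len(truncated))
print("mean rounded:  ", sum(rounded) / len(rounded))
```

The truncating version comes out with a mean of about minus half an LSB even though the input mean is zero, and inside a filter with feedback that kind of bias can accumulate. Rounding to nearest removes it in this toy case.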

Audio, like a lot of physical systems, involves logarithmic scales, which is where floating-point shines. Problem is, all the other physical systems, which are not logarithmic, only get to eat the scraps left over by IEEE 754. Floating point is a scam!

Call me when you've found a way to encode transcendental numbers.

May I propose a dedicated circuit (analog because you can only ever approximate their value) that stores and returns transcendental/irrational numbers exclusively? We can just assume they're going to be whatever value we need whenever we need them.

Wouldn't noise in the circuit mean it'd only be reliable to a certain level of precision, anyway?

I mean, every irrational number used in computation is reliable to a certain level of precision. Just because the current (heh) methods aren't precise enough doesn't mean they'll never be.

You can always increase the precision of a computation; analog signals are limited by quantum physics.

Do we even have a good way of encoding them in real life without computers?

Just think about them real hard

While we're at it, what the hell is -0 and how does it differ from 0?

It's the negative version

So it's just like 0 but with an evil goatee?

Look at the graph of y=tan(x)+π/2

-0 and +0 are completely different.
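Joking aside, the difference is observable. A small sketch in Python: the two zeros compare equal, but the sign bit survives and changes the result of functions with a discontinuity at zero, such as `atan2`.

```python
import math

pos_zero = 0.0
neg_zero = -0.0

print(pos_zero == neg_zero)            # True: they compare equal
print(math.copysign(1.0, pos_zero))    # 1.0
print(math.copysign(1.0, neg_zero))    # -1.0: the sign bit is still there

# The sign of zero picks which side of the discontinuity you land on.
print(math.atan2(0.0, pos_zero))       # 0.0
print(math.atan2(0.0, neg_zero))       # 3.141592653589793
```

In other words, -0 records that a value approached zero from the negative side, which matters exactly where a function jumps at zero.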

From time to time I see this pattern in memes, but what is the original meme / situation?

It's my favourite format.

I think the original was 'stop doing math'

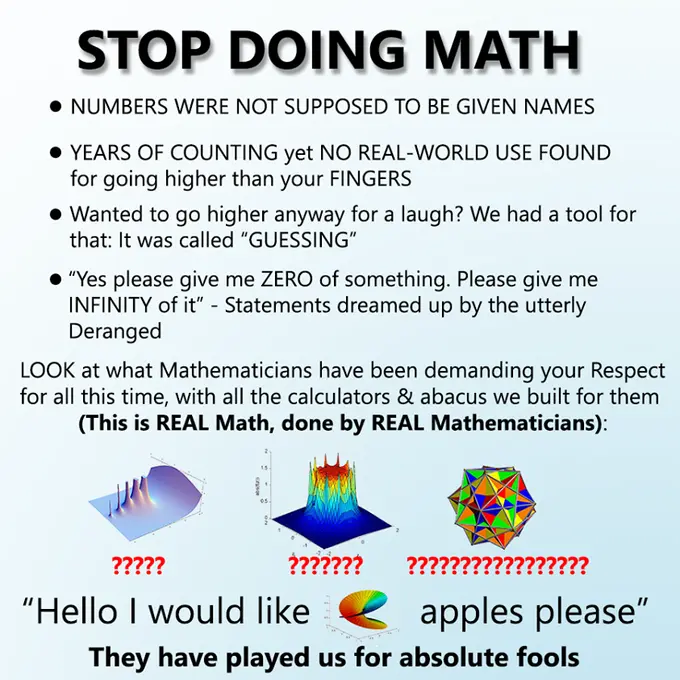
