Redox OS 0.9.0 - Redox - Your Next(Gen) OS

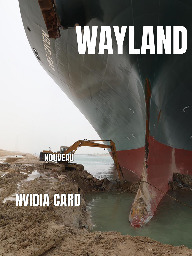

What a stupid article. It's like saying "stop using electric vehicles because you can't use gas stations". I don't understand why he's so adamant about this. It's not like Wayland has had 20 extra years to develop, like X11 did. People keep working on it, and it takes time to polish things.

It's amazing that Linux gaming is becoming a thing that's better sometimes than Windows gaming (minus the getting banned part in some games). I also like that AMD is making some big pushes on open source drivers, plus their ROCm open-source alternative to CUDA.

This is a great time for Linux users! :)

Cheats nowadays don't even need to run on your machine. You can get a second computer that is connected to your computer via a capture card, analyze your video feed with an AI and send mouse commands wirelessly from it (mimicking the signal for your USB receiver).

These anti-cheats are nothing more than privacy invasion, and any game maker that believes it has the upper hand over people who want to cheat is very wrong.

Opening up anti-cheat support for Linux would at least make them more creative at finding these people from their behaviour, and not from analysing everything that's running in the background.

If you already have medical knowledge, why not look into bioinformatics? Cyber security would be a pretty big jump if you're not into tweaking computers as a hobby. For example, have you ever set up Linux on your own?

Certifications will give you a starting point, but it will take years for all the information to settle properly in your mind.

Wow, some of the comments on that article saying Google should have made Android closed source are mind-boggling. They realize they never would have had their current worldwide market share if they did that, no?

But maybe if they did, we would have had more people working on true Linux phones 🤔 I'm a bit torn on this one haha.

How will you move to WhatsApp if everyone else uses iMessage? Europe has the same issue, but reversed. Everyone uses WhatsApp and can't jump to Signal/Telegram because they're not as popular.

Piracy. I'd buy albums if I had money, though. I'll slowly phase into getting them once I get some more cash.

I can find most stuff I listen to, and I rarely grow my music library. I mostly listen to 20-30 albums, with some more mainstream music peppered in.

My music library currently sits at 90 gigabytes (mostly flacs), so quite small compared to others I've seen around here. Still, I have plenty of variation to keep me entertained :D

If you have Tidal, aren't there some apps to rip the lossless audio from there? You could get most of the stuff that you need, and then cancel the subscription. If you feel bad, maybe order some merch from the band, haha.

If it's really warm, you can't really blame her. It's just a brief period during the year when she'll be like this, so what's the harm? Not sure where you live, but I'd wear nothing but my underwear where I am right now.

I'd say the best course of action would be to say nothing and just ignore it. If my stepfather said something like that to me, I'd feel a bit uncomfortable. It's up to you though, you know your family better than we do.

I've looked into this before, and it really depends on the type of RFID they use. Older versions have been cracked, but newer ones can't be copied over (easily or at all).

If your company is serious about security, you will not be able to put the content of the card on your phone. Newer, more secure versions of RFID receive a code from the reader system, compute a reply internally, and then send back the answer. Even if you try to copy this over, you will not be able to open the doors of your facility.
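To make the "receives a code, replies internally, sends back the answer" part more concrete, here is a minimal sketch of a challenge-response check. Everything in it is an assumption for illustration: the `Card` and `Reader` classes are hypothetical, and HMAC-SHA256 is only a stand-in, since real access cards use their own protocols and ciphers.

```python
import hashlib
import hmac
import secrets


class Card:
    """Hypothetical card: holds a secret key and never reveals it."""

    def __init__(self, key: bytes):
        self._key = key

    def respond(self, challenge: bytes) -> bytes:
        # The reply depends on both the secret key and the fresh challenge.
        return hmac.new(self._key, challenge, hashlib.sha256).digest()


class Reader:
    """Hypothetical door reader that shares the same secret key."""

    def __init__(self, key: bytes):
        self._key = key

    def new_challenge(self) -> bytes:
        # A fresh random value for every attempt.
        return secrets.token_bytes(16)

    def verify(self, challenge: bytes, response: bytes) -> bool:
        expected = hmac.new(self._key, challenge, hashlib.sha256).digest()
        return hmac.compare_digest(expected, response)


key = secrets.token_bytes(32)
card, reader = Card(key), Reader(key)

first = reader.new_challenge()
recorded = card.respond(first)          # what a sniffer could capture
print(reader.verify(first, recorded))   # True: the genuine exchange

second = reader.new_challenge()
print(reader.verify(second, recorded))  # False: replaying the old answer fails
```

The point is that copying what the card sends is useless on the next attempt. You would need the key itself, and the card is built not to give it up.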

I think the first step should be to use one of these apps that can read RFID and see what protocol your card uses. If it's an insecure one (i.e., it only pushes out a code that the system checks against its database), you could probably try to copy it over. However, if it's not, you could also just dissolve the card with some acetone and place the resulting wires in your phone's case, near the bottom. That way, it shouldn't interfere with your phone's NFC, as that one is usually next to the top area of your phone.

With the way current LLMs operate? The short answer is no. Most machine learning models learn the probability distribution by performing backward propagation, which involves "trickling down" errors from the output node all the way back to the input. More specifically, the computer calculates the gradient of the error with respect to the weights of each layer and uses that to slowly nudge the model towards the correct answer by updating the values in each neural layer. Of course, things like the attention mechanism resemble the way humans pay attention, but the underlying processes are vastly different.
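If it helps, here is a toy sketch of that "trickling down" on the smallest network I could think of: one input, one hidden tanh unit, one output. The starting weights, the learning rate and the single training point are all made up for illustration, and real models do the same thing over billions of weights with automatic differentiation.

```python
import math


def forward(params, x):
    """Run the input through the hidden unit and the output unit."""
    w1, b1, w2, b2 = params
    h = math.tanh(w1 * x + b1)   # hidden activation
    y = w2 * h + b2              # network output
    return h, y


def loss(params, x, target):
    """Squared error between the output and the target."""
    _, y = forward(params, x)
    return (y - target) ** 2


def backward(params, x, target):
    """Chain rule, applied from the output back towards the input."""
    w1, b1, w2, b2 = params
    h, y = forward(params, x)
    dy = 2.0 * (y - target)      # d(loss)/d(output)
    dw2 = dy * h                 # output-layer weight
    db2 = dy                     # output-layer bias
    dh = dy * w2                 # error passed back to the hidden unit
    dz = dh * (1.0 - h * h)      # through the tanh derivative
    dw1 = dz * x                 # hidden-layer weight
    db1 = dz                     # hidden-layer bias
    return [dw1, db1, dw2, db2]


params = [0.3, 0.0, -0.2, 0.0]   # arbitrary starting values
x, target = 0.5, 0.8             # a single made-up training example
learning_rate = 0.1

initial_loss = loss(params, x, target)
for _ in range(200):
    grads = backward(params, x, target)
    # Nudge every parameter a little against its gradient.
    params = [p - learning_rate * g for p, g in zip(params, grads)]
final_loss = loss(params, x, target)

print(f"loss before: {initial_loss:.4f}, after: {final_loss:.2e}")
```

Note how `backward` needs the exact error signal and the exact weights of the layer above it. That is the part that biological neurons have no obvious way of doing.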

In the brain, things don't really work like that. Neurons don't perform backpropagation, and, if I remember correctly, instead build proteins to improve the conductivity along the axons. This allows us to improve connectivity in a neuron the more current passes through it. Similarly, when multiple neurons in a close region fire together, they sort of wire together. New connections between neurons can appear from this process, which neuroscientists refer to as neuroplasticity.

When it comes to the Doom example you've given, that approach relies on the fact that you can encode the visual information as signals. It is a reinforcement learning problem where the action space is small, and the reward function is pretty straightforward. When it comes to LLMs, the usual vocabulary size of the more popular models is between 30k and 60k tokens (these are small parts of a word, for example "#ing" in "writing"). That means you would need a way to encode each of those tokens as input for the biological neural net, and unless you encode it as a phonetic representation of the word, you're going to need a lot of neurons to mimic the behaviour of the computer version of LLMs, which is not really feasible. Oh, and let's not forget that you would need to formalize the output of the network and find a way to measure that! How would we know which neuron produces the output for a specific part of a sentence?

We humans are capable of learning language, mainly due to this skill being encoded in our DNA. It is a very complex problem that requires the interaction between multiple specialized areas: e.g. Broca's (for producing speech), Wernicke's (for understanding language), certain bits in the lower temporal cortex that handle categorization of words and other tasks, plus a way to encode memories using the hippocampus. The body generates these areas using the genetic code, which has been iteratively improved over many millennia. If you dive really deep into this subject, you'll start seeing some scientists who argue that consciousness is not really a thing and that we are a product of our genes and the surrounding environment, that we act in predefined ways.

Therefore, you wouldn't be able to call a small neuron array conscious. It only elicits a simple chemical process, which appears when you supply enough current for a few neurons to reach the threshold potential of -55 mV. To have things like emotion, body autonomy and many other things that one would think of when talking about consciousness, you would need a lot more components.

I see your point. However, integrating Rust properly in the Linux kernel is an uphill battle. Redox OS is not at all close to being stable, but it showcases that you can build a Rust kernel from scratch, and integrate it into an OS that meets some of the requirements of a modern one. Of course, considering it a toy project and glossing over its potential doesn't help with adoption. They even mention in their description that the current donations can only support a community manager and a student developer. When you compare that to the amount of money and developers involved in the Linux kernel, it's insignificant.

I was not suggesting that the Rust For Linux devs jump ship, but it could be beneficial for the investors behind the project to look at alternatives. Heck, the Linux kernel started as a toy project itself. I believe that a team focused solely on such a Rust-only kernel could spearhead needed changes to reach something stable, as opposed to investing time and money into fighting established C developers to integrate a memory-safe language in the kernel fully.

Did you even read the article? In a report they said they had been "eliminated" for being "terrorists". All for doing 300$ worth of damage. Two kids dead, just for 300$.

They didn't even let them leave when their parents wanted to take them to another country.

A duck walked up to a soup stand, and he said to the man running the stand...

You can do it here too! Just tag @remindme@mstdn.social :)

The Framework 13 inch model should be plenty, especially if you want to dev on the go. Much more lightweight and smaller, and you can connect it to external monitors if the screen size is not big enough. Also, you shouldn't have issues running Linux on either laptop.

Instead of going for the 16 inch version, I would use the extra 900-1000 euros (that's the amount I saw I could save between the two almost maxed-out models) to make a dedicated server or mini-cluster to run your workloads. Deploy Kubernetes or Proxmox on it, and you'll also get some more practice on it outside work if you want to run stuff for your home lab. That is only if you don't want to game on your laptop, but I'd still put that money aside to make a desktop.

And for some reason you still can't charge transport cards online or with a credit/debit card if you don't have a Japanese phone. Think that's coming in 2035 at this rate? 🤣

He's the chosen one, of course it's supposed to look like that!

Why is that a problem?

What db2 already said. Microsoft just released Phi-3 mini, which could, allegedly, run locally on newer smartphones.

If I understood correctly, the Rabbit thingy just captures your information locally and then forwards it to their server. So, if you want more power, you could probably do the same by submitting the same info to a bigger open source model than Phi-3, like Llama 3, hosted on your homelab. I believe you can set it up with huggingface/gradio, which sort of provides an API that you could use.

That way, you don't need a shitty orange box, and can always get the latest open source models with a few lines of code. There are plenty of open source frameworks in the works at the moment, and I believe that we're not far off from having multi-modal LLMs running on homelab-level hardware (if you don't mind a bit of lag).

::: spoiler Click for longer opinion

If I remember correctly, even though Fuchsia is used in production, it is mainly targeting mobile or IoT devices. Nevertheless, the underlying micro-kernel, Zircon, is written in C/C++, which differs from Redox. Now, I'm not saying that Redox solves everything by writing the kernel in Rust. It will require plenty of unsafe blocks to achieve what it needs, but it makes you aware beforehand that you should be careful about how you implement that bit of code. Having this clear marking could also make the kernel code review process more likely to catch issues.

Disregarding this, if I am not mistaken, Redox aims to be a drop-in replacement for Linux one day, both for desktop and server, while Fuchsia only wishes to be integrated in/replace Android. Linux is perfectly fine for most use cases, I am not suggesting otherwise! However, given how many vulnerabilities have resulted from overflows and memory corruption that could potentially have been easier to identify if Rust (or any other memory-safe language) had been used, you'd think that there is an incentive to rely on it for kernel development. Linus himself made this decision as well when allowing Rust to be used in Linux kernel development (albeit perhaps a bit too early).
:::

The Linux kernel is not flawed, and Redox is probably years away from being even near it. However, having memory-safety from the get-go as a requirement for developing the kernel could lead to fewer exploits, compared to what we have today with Linux. Just as you've said, most users are not aware of it/they don't care, but the big players will care about keeping information safe on their servers. Just to conclude, Redox OS is not just Linux rewritten in Rust, and could potentially have many other benefits that are particularly juicy for data centers. Too bad it's not production ready yet :D

Yeah, it's the Osaifu-Keitai. Apple has it enabled for all phones on the market, while Android phone manufacturers avoid adding it to theirs outside Japan because they would have to pay fees to Sony for it. The funny part is that Sony itself doesn't enable it for phones outside Japan, even though FeliCa is Sony's own technology :D Another funny bit is that some phones, like the Pixel, are capable of running it on units made for other markets. Some users were able to force the Osaifu-Keitai app to think the phone was made in Japan, and that was all it took to enable it (although you'd have to root your phone + the manufacturer should have released their phones in Japan, to ensure the chip is capable). So, yeah, although a few years ago it might have been a specific chip being needed in the phone, nowadays it's mostly software that doesn't allow you to use the one you have while in Japan.

All in all, PASMO/Suica/etc is basically a very limited debit card company haha. I guess Japanese people enjoy using it mainly because it puts a cap on how much they can spend (iirc, about 100 euros allowed at once on the card). Japan is a highly consumerist society, so this format was probably adopted (instead of credit/debit cards) mainly to combat it somewhat :D

It's a cooling block for the GPU, and apparently it can knock off up to 20 degrees. However, it is crazy expensive, like 900$.

Are you sure about them being removed from the platform? I purchased GTA: San Andreas before the shitty remaster came out, and I can still download it. It is no longer available/purchasable, but I still "own" it. Do you have a better example, as I haven't really heard of this happening before?

But yeah, all the other points you mention are valid. GOG is better in this regard, but their platform is nowhere near the level of Steam in terms of user experience.

That is good to know. Tried the free version of Roll20 before, and it definitely felt lacking in certain areas. Oh, and thanks for letting me know about the sale! I'll definitely keep an eye out for that one :)

Good luck! You can try the huggingface-chat repo, or ollama with this web-ui. Both should be decent, as they have instructions to set up a docker container.
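If you go the ollama route, talking to it from a script could look roughly like the sketch below. It assumes ollama is running locally on its default port (11434), that you have already pulled a model tagged `llama3`, and that you use its `/api/generate` endpoint. The `build_request` and `ask` helpers are names I made up for this example.

```python
import json
import urllib.request

# Assumption: a local ollama server listening on its default port.
OLLAMA_URL = "http://localhost:11434/api/generate"


def build_request(prompt, model="llama3"):
    """Build the HTTP request for a single, non-streamed completion."""
    payload = {"model": model, "prompt": prompt, "stream": False}
    return urllib.request.Request(
        OLLAMA_URL,
        data=json.dumps(payload).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )


def ask(prompt, model="llama3"):
    """Send the prompt to the local server and return the generated text."""
    with urllib.request.urlopen(build_request(prompt, model), timeout=120) as reply:
        return json.loads(reply.read())["response"]
```

With the server up, `print(ask("Say hello in five words."))` should be all you need. Swapping models is just a matter of changing the `model` argument to whatever tag you pulled.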

I believe the Llama 3 models are out there in a torrent somewhere, but I didn't dig to find it. For the 70B model, you'll probably need around 64GB of RAM available, but the 8B one should run fine with just 8GB. It will be somewhat slow though, compared to the ChatGPT experience. The self-attention mechanism can be parallelized, which is why you will see much better results on a GPU. According to some others that tested it, if you offload some stuff to RAM, you could see ~10-12 tokens per second on an RTX 3090 for certain 70B models. But more capable ones will be at less than 1 token per second, all depending on the context window you use.
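For a rough feel of where those RAM numbers come from, you can estimate the size of the weights alone as parameters times bits per weight. This is only back-of-the-envelope arithmetic: it assumes Llama 3's 8B and 70B sizes, and it ignores the context cache and runtime overhead, so the real footprint will be higher.

```python
def weight_memory_gb(n_params, bits_per_weight):
    """Approximate size of the weights alone, in gigabytes (10^9 bytes)."""
    return n_params * bits_per_weight / 8 / 1e9


for name, n_params in [("8B", 8e9), ("70B", 70e9)]:
    for bits in (16, 8, 4):
        size = weight_memory_gb(n_params, bits)
        print(f"{name} at {bits}-bit: ~{size:.0f} GB")
```

So a 70B model only squeezes into 64GB once it is quantized to around 4 bits (about 35 GB of weights), while the 8B one at 4 bits (about 4 GB) leaves room to spare on an 8GB machine.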

If you don't have a GPU available, just give the Phi-3 model a try :D If you quantize it to 4 bits, it can apparently get 12 tokens per second on an iPhone haha. It should play nice with pulling information from a search engine, or a vector database like milvus, qdrant or chroma.

That's unfortunate :( I think you can still run it in QEMU, if you're interested.

I'm really sorry to hear that. I hope you have enough support to deal with it!

Regarding bioinformatics, it doesn't have to be a human-centered job. You can get into the data science aspect of it, and make good money off of helping research diseases, for example. This could also be a remote job, and you'd probably have an easier time getting into it. For data science, you can get quite far with Python, which is easier to pick up when compared with other languages.

You can also explore your options further by just asking ChatGPT, and seeing what the potential job requirements would be. It's decent if you want to brainstorm some stuff, but do look up the information yourself on search engines. Describe your experience and what you'd want, and ask what to expect if you were to jump into that field. Perhaps this could help you decide better.

I wish you the best of luck!

That's good to know! The format overall was nice. They got a few questions wrong, but the users seem to provide the correct answers in the discussion.

I'd pay, but I'm not really in a position to do it right now. Also, I can't justify doing it for only a single exam, when the price for a month is 40$, and I only need it for one day haha.

Framework laptops are getting better. Not Apple levels good, but they certainly beat them in average longevity.

The only hope with Apple is having the EU step in again to stop this kind of bullcrap.