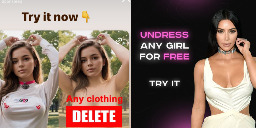

Instagram Advertises Nonconsensual AI Nude Apps

Instagram is profiting from several ads that invite people to create nonconsensual nude images with AI image generation apps, once again showing that some of the most harmful applications of AI tools are not hidden in the dark corners of the internet, but are actively promoted to users by social media companies unable or unwilling to enforce their policies about who can buy ads on their platforms.

Parent company Meta’s Ad Library, which archives ads on its platforms along with who paid for them and where and when they were posted, shows that the company has previously taken down several of these ads. But many ads that explicitly invited users to create nudes, along with some of the ad buyers, were still up until I reached out to Meta for comment. Some of these ads were for the best-known nonconsensual “undress” or “nudify” services on the internet.

Something like this could be career ending for me. Because of the way people react. “Oh did you see Mrs. Bee on the internet?” Would have to change my name and move three towns over or something. That’s not even considering the emotional damage of having people download you. Knowledge that “you” are dehumanized in this way. It almost takes the concept of consent and throws it completely out the window. We all know people have lewd thoughts from time to time, but I think having a metric on that…it would be so twisted for the self-image of the victim. A marketplace for intrusive thoughts where anyone can be commodified. Not even celebrities, just average individuals trying to mind their own business.

Exactly. I’m not even shy, my boobs have been out plenty and I’ve sent nudes, all that. Hell, I met my wife with my tits out. But there’s a wild difference between pictures I made and released of my own will in certain contexts and situations vs. pictures attempting to approximate my naked body, generated without my knowledge or permission because someone had a whim.

I think this is why it's going to be interesting to see how we navigate this as a society. So far, we've done horribly. It's been over a century now that we've acknowledged sexual harassment in the workplace is a problem that harms workers (and reduces productivity) and yet it remains an issue today (only now we know the human resources department will protect the corporate image and upper management by trying to silence the victims).

What deepfakes and generative AI do is make it easy for a campaign staffer, or an ambitious corporate ladder climber with a buddy with know-how, or even a determined grade-school student to create convincing media and publish it on the internet. As I note in the other response, if a teen's sexts get reported to law enforcement, they'll gladly turn it into a CSA production and distribution issue and charge the teens themselves with serious felonies with long prison sentences. Now imagine if some kid wanted to make a rival disappear. Heck, imagine the smart kid wanting to exact revenge on a social media bully, now equipped with the power of generative AI.

The thing is, the tech is out of the bag, and as with a prince in the Middle East looking at cloned sheep (with deteriorating genetic defects) and wanting to create a clone of himself as an heir, humankind will use tech in the worst, most heinous possible ways until we find cause to cease doing so. (And no, judicial punishment doesn't stop anyone.) So this is going to change society, whether we decide collectively that sexuality (even kinky sexuality) is not grounds to shame and scorn someone, or whether we use media scandals the way Italian monastics and Russian oligarchs use poisons, and scandalize each other like it's the shootout at the O.K. Corral.

Thanks, I liked this reply. There is a lot of nuance here.

I think you might be overreacting, and if you're not, then it says much more about the society we are currently living in than this particular problem.

I'm not promoting AI fakes, just to be clear. That said, AI is just making fakes easier. If you were a teacher (for example) and you're so concerned that a student of yours could create this image that would cause you to pick up and move your life, I'm sad to say they can already do this and they've been able to for the last 10 years.

I'm not saying it's good that a fake, or an unsubstantiated rumor of an affair, etc., can have such a big impact on our lives, but it is troubling that someone like yourself can fear for their livelihood over something so easy for anyone to produce. Are we so fragile? Should we not worry more about why our society is so prudish and ostracizing toward basic human sexuality?

None of that is relevant. The issue being discussed here isn't one of whether or not it's currently possible to create fake nudes.

The original post being replied to indicated that, since AI, an artist, a photoshopper, whatever, is just creating an imaginary set of genitalia, and they have no ability to know if it's accurate or not, there is no damage being done. That's what people are arguing about.

The society we are living in can be handling things incorrectly, but it can absolutely have real-world damaging effects. As a collective we should worry about our society, but individuals absolutely are, and should be, justified in worrying about their lives being damaged by this.