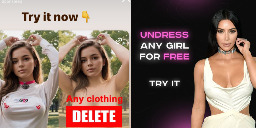

Instagram Advertises Nonconsensual AI Nude Apps

Instagram is profiting from several ads that invite people to create nonconsensual nude images with AI image generation apps, once again showing that some of the most harmful applications of AI tools are not hidden in the dark corners of the internet, but are actively promoted to users by social media companies unable or unwilling to enforce their policies about who can buy ads on their platforms.

While parent company Meta’s Ad Library, which archives ads on its platforms, who paid for them, and where and when they were posted, shows that the company has taken down several of these ads previously, many ads that explicitly invited users to create nudes, along with some ad buyers, were still up until I reached out to Meta for comment. Some of these ads were for the best-known nonconsensual “undress” or “nudify” services on the internet.

It’s all so incredibly gross. Using “AI” to undress someone you know is extremely fucked up. Please don’t do that.

I'm going to undress Nobody. And give them sexy tentacles.

Behold my meaty, majestic tentacles. This better not awaken anything in me...

Same vein as "you should not mentally undress the girl you fancy". It's just a support for that. Not that I have used it.

Don't just upload someone else's image without consent, though. That's even illegal in most of Europe.

Why should you not mentally undress the girl you fancy (or not, what difference does it make)? Where is the harm in it?

there is none, that's their point

Would it be any different if you learn how to sketch or photoshop and do it yourself?

You say that as if photoshopping someone naked isn't fucking creepy as well.

Creepy, maybe, but tons of people have done it. As long as they don't share it, no harm is done.

I don't think that many have, dude. Like sure, if you're talking total number and not percentage, but this planet has so many people you could also claim that tons of people are pedophiles too.

Lol you'd be surprised...isn't this one of those things people would do in private but never admit in public (because of people like you getting all touchy and creeped out by it)?

You say this like we SHOULDN'T be creeped out that you are digitally undressing someone without their permission

You can feel whatever you want.

Okie, I feel you're a creep that shouldn't be allowed within 100m of a school

Everyone has imagined someone naked at some point. Do you feel the same? How do you feel about yourself?

Creepy to you, sure. But let me add this:

Should it be illegal? No, and good luck enforcing that.

You're at least right on the enforcement part, but I don't think the illegality of it should be as hard of a no as you think it is.

Yes, because the AI (if not local) will probably store the images on their servers.

good point

This is the only good answer.

This is also fucking creepy. Don't do this.

I am not saying anyone should do it and don't need some internet stranger to police me thankyouverymuch.

Can you articulate why, if it is for private consumption?

Consent.

You might be fine with having erotic materials made of your likeness, and maybe even of your partners, parents, and children. But shouldn't they have the right not to be objectified as wank material?

I partly agree with you though, it's interesting that making an image is so much more troubling than having a fantasy of them. My thinking is that it is external, real, and thus more permanent, even if it is never saved, lost, hacked, sold, used for defamation, or simply shared.

To add to this:

Imagine someone would sneak into your home and steal your shoes, socks and underwear just to get off on that or give it to someone who does.

Wouldn't that feel wrong? Wouldn't you feel violated? It's the same with such AI porn tools. You serve to satisfy the sexual desires of someone else and you are given no choice. Whether you want it or not, you are becoming part of their act. Becoming an unwilling participant in such a way can feel similarly violating.

They are painting and using a picture of you, which is not as you would like to represent yourself. You don't have control over this and thus, feel violated.

This reminds me of that fetish where one person is basically acting like a submissive pet and gets treated like one by their "master". They get aroused by doing that in public, one walking the other on a leash like a dog on hands and knees. People around them become passive participants in that spectacle. And those people often feel violated. Becoming an unwilling, unasked participant, either active or passive, in the sexual act of someone else and having little or no control over it feels wrong and violating for a lot of people.

In principle that even shares some similarities to rape.

There are countries where you can't just take pictures of someone without asking them beforehand. Also there are certain rules on how such a picture can be used. Those countries acknowledge and protect the individual's right to their image.

Just to play devil's advocate here, in both of these scenarios:

The person has the knowledge that this is going on. In the situation with AI nudes, the actual person may never find out.

Again, not to defend this at all, I think it's creepy af. But I don't think your arguments were particularly strong in supporting the AI nudes issue.

In every chat I find about this, I see people railing against AI tools like this but I have yet to hear an argument that makes much sense to me about it. I don’t care much either way but I want a grounded position.

I care about harms to people and in general, people should be free to do what they want until it begins harming someone. And then we get to have a nuanced conversation about it.

I’ve come up with a hypothetical. Let’s say that you write naughty stuff about someone in your diary. The diary is kept in a secure place and in private. Then, a burglar breaks in and steals your diary and mails that page to whomever you wrote it about. Are you, the writer, in the wrong?

My argument would be no. You are expressing a desire in private and only through the malice of someone else was the harm done. And no, being “creepy” isn’t an argument either. The consent thing I can maybe see but again do you have a right not to be fantasized about? Not to be written about in private?

I’m interested in people’s thoughts because it bugs me not to have a good answer to this argument.

Yeah it's an interesting problem.

If we go down the path of ideas in the mind and the representations we create and visualize in our mind's eye, to forbid people from conceiving of others sexually means there really is no justification for conceiving of people generally.

If we try to seek a justification, where is that line drawn? What is sexual, and what is general? How do we enforce this, or at least how do we catch people in the act and shame them into stopping their behavior, especially if we don't possess the capability of telepathy?

What is harm? Is it purely physical, or also psychological? Is there a degree of harm that should be allowed, or that is inescapable despite our best intentions?

The angle that you point out regarding writing things down about people in private can also go different ways. I write things down about my friends because my memory sucks sometimes and I like to keep info in my back pocket for when birthdays, holidays, or special occasions come. What if I collected information about people that I don't know? What if I studied academics who died in the past to learn about their lives, like Ben Franklin? What if I investigated my neighbors by pointing cameras at their houses, or installing network sniffers or other devices to try to collect information on them? Does the degree of familiarity with those people I collect information about matter, or is the act wrong in and of itself? And do my intentions justify my actions, or do the consequences of said actions justify them?

Obviously I think it's a good thing that we as a society try to discourage collecting information on people who don't want that information collected, but there is a portion of our society specifically allowed to do this: the state. What makes their status deserving of this power? Can this power be used for ill and good purposes? Is there a level of cross collection that can promote trust and collaboration between the state and its public, or even amongst the public itself? I would say that there is a level where if someone or some group knows enough about me, it gets creepy.

Anyways, lots of questions and no real answers! I'd be interested in learning more about this subject, and I apologize if I steered the convo away from sexual harassment and violation. Consent extends to all parts of our lives, but sexual consent does seem to be a bigger problem given the evidence of this post. Looking forward to learning more!

I think we’ve just stumbled on an issue where the rubber meets the road as far as our philosophies about privacy and consent. I view consent as important mostly in areas that pertain to bodily autonomy right? So we give people the rights to use our likeness for profit or promotion or distribution. And what we’re giving people is a mental permission slip to utilize the idea of the body or the body itself for specific purposes.

However, I don’t think that these things really pertain to private matters. Because the consent issue only applies when there are potential effects on the other person. Like if I talk about celebrities and say that imagining a celebrity sexually does no damage because you don’t know them, I think most people would agree. And so if what we care about is harm, there is no potential for harm.

With surveillance matters, the consent does matter because we view breaching privacy as potential harm. The reason it doesn’t apply to AI nudes is that privacy is not being breached. The photos aren’t real. So it’s just a fantasy of a breach of privacy.

So for instance if you do know the person and involve them sexually without their consent, that’s blatantly wrong. But if you imagine them, that doesn’t involve them at all. Is it wrong to create material imaginations of someone sexually? I’d argue it’s only wrong if there is potential for harm and since the tech is already here, I actually view that potential for harm as decreasing in a way. The same is true nonsexually. Is it wrong to deepfake friends into viral videos and post them on twitter? Can be. Depends. But do it in private? I don’t see an issue.

The problem I see is the public stuff. People sharing it. And it’s already too late to stop most of the private stuff. Instead we should focus on stopping AI porn from being shared and posted and create higher punishments for ANYONE who does so. The impact of fake nudes and real nudes is very similar, so just take them similarly seriously.

What I find interesting is that for me personally, writing the fantasy down (rather than referring to it) is against the norm, a.k.a. weird, but not wrong.

Painting a painting of it is weird and iffy, hanging it in your home is not ok.

It's strange how it changes along that progression, but I can't rightly say why.

Not necessarily, no. It could be that they might just think they've misplaced their socks. If you've lived in an apartment building with shared laundry spaces, it's not so uncommon to lose some minor pieces of clothing. But just because they don't get to know about it, it's not less wrong, nor should it be less illegal.

Also in connection with my remarks before:

A lot of our laws also apply even if no one is knowingly damaged (yet). (May of course depend on the legislation of wherever you live.)

Already intending to commit a crime can sometimes be reason enough to bring someone to court.

We can argue how much sense that makes of course, but at the current state, we, as a society, decided that doing certain things should be illegal, even if the damage has not manifested yet. And I see many good points to handle it that way with such AI porn tools as well.

Traumatizing rape victims with nonconsensual imagery of them naked and doing sexual things with others and sharing it is totally not going to fuck up the society even more and lead to a bunch of suicides! /s

Ai is the future. The future is dark.

tbf, the past and present are pretty dark as well

That's why we need strong legislation. Most countries worldwide are missing crucial time frames for making such laws. At least some are catching up, like the EU did recently with their first AI act.

Though just like your thoughts, the AI is imagining the nude parts as well because it doesn't actually know what they look like. So it's not actually a nude picture of the person. It's that person's face on an entirely fictional body.

But the issue is not with the AI tool, it's with the human wielding it for their own purposes which we find questionable.

An ex-friend of mine Photoshopped nudes of another friend. For private consumption. But then someone found that folder. And suddenly someone has to live with the thought that these nudes, created without their consent, were used as spank bank material. It's pretty gross and it ended the friendship between the two.

You can still be wank material with just your Facebook pictures.

Nobody can stop anybody from wanking on your images, AI or not.

Related Louis CK

That's already weird enough, but there is a meaningful difference between nude pictures and clothed pictures. If you wanna whack one to my fb pics of me looking at a horse, ok, weird. Don't fucking create actual nude pictures of me.

Louis CK has also sexually harassed a number of women (jerking off in front of unwilling viewers)...

If you have to ask, you're already pretty skeevy.

And if you have to say that, you're already sounding like some judgy jerk.

The fact that you do not even ask such questions shows that you are narrow-minded. Such a mentality leads to people thinking that “homosexuality is bad”, never even trying to ask why, and never having a chance of changing their mind.

They cannot articulate why. Some people just get shocked at "shocking" stuff... maybe some societal reaction.

I do not see any issue in using this for personal consumption. Yes, I am a woman. And yes people can have my fucking AI generated nudes as long as they never publish it online and never tell me about it.

The problem with these apps is that they enable people to make these at large and leave them to publish them freely wherever. This is where the danger lies. Not in people jerking off to a picture of my fucking cunt alone in a bedroom.

Mhmm. Cool.

It's creepy and can lead to obsession, which can lead to actual harm for the individual.

I don't think it should be illegal, but it is creepy and you shouldn't do it. Also, sharing those AI images/videos could be illegal, depending on how they're represented (e.g. it could constitute libel or fraud).

I disagree. I think it should be illegal. (And stay that way in countries where it's already illegal.) For several reasons. For example, you should have control over what happens with your images. Also, it feels violating to become unwillingly and unasked part of the sexual act of someone else.

That sounds problematic though. If someone takes a picture and you're in it, how do they get your consent to distribute that picture? Or are they obligated to cut out everyone but those who consent? What does that mean for news orgs?

That seems unnecessarily restrictive on the individual.

At least in the US (and probably lots of other places), any pictures taken where there isn't a reasonable expectation of privacy (e.g. in public) are subject to fair use. This generally means I can use it for personal use pretty much unrestricted, and I can use it publicly in a limited capacity (e.g. with proper attribution and not misrepresented).

Yes, it's creepy and you're justified in feeling violated if you find out about it, but that doesn't mean it should be illegal unless you're actually harmed. And that barrier is pretty high to protect people's rights to fair use. Without fair use, life would suck a lot more than someone doing creepy things in their own home with pictures of you.

So yeah, don't do creepy things with other pictures of other people, that's just common courtesy. But I don't think it should be illegal, because the implications of the laws needed to get there are worse than the creepy behavior of a small minority of people.

Can you provide an example of when a photo has been taken that breaches the expectation of privacy that has been published under fair use? The only reason I could think that would work is if it's in the public interest, which would never really apply to AI/deepfake nudes of unsuspecting victims.

I'm not really sure how to answer that. Fair use is a legal term that limits the "expectation of privacy" (among other things), so by definition, if a court finds it to be fair use, it has also found that it's not a breach of the reasonable expectation of privacy legal standard. At least that's my understanding of the law.

So my best effort here is tabloids. They don't serve the public interest (they serve the interested public), and they violate what I consider a reasonable expectation of privacy standard, with my subjective interpretation of fair use. But I disagree with the courts quite a bit, so I'm not a reliable standard to go by, apparently.

Fair use laws relate to intellectual property; privacy laws relate to an expectation of privacy.

I'm asking when has fair use successfully defended a breach of privacy.

Tabloids sometimes do breach privacy laws, and they get fined for it.

Right, they're orthogonal concepts. If something is protected by fair use laws, then privacy laws don't apply. If privacy laws apply, then it's not fair use.

The proper discussion in this area is around libel law. That's where tabloids are usually sued, not for fair use or "privacy violations." For a libel suit to succeed, the plaintiff must prove that the defendant made false statements that caused actual harm to the plaintiff's reputation. There are a bunch of lawsuits going on right now examining deep fakes and similarly allegedly libelous use of an individual's likeness. For a specific example, look at the Taylor Swift lawsuit around deep fake porn.

But the crux of the matter is that you don't have a right to your likeness, generally speaking, and fair use laws protect creepy use of legally acquired representations of your likeness.

The Deepfake stuff is interesting from a legal standpoint, and that is essentially the topic of this thread. When Deepfake first became a thing, many companies (like Reddit) chose to ban the content; they did so voluntarily, perhaps a mix of morality and liability issues.

What I referred to regarding tabloids is that there have been many cases of paparazzi being fined for breaches of privacy, not libel. From my studies I recall a good example being "if you need a ladder to see over someone's fence you are invading the expectation of privacy". This was before drones were a thing so I don't know how it applies these days.

I agree that you should have control of your likeness, but I don't think it is as protected as your comment suggests.

Privacy violations fall under illegally acquiring content, which is not subject to fair use.

But if I obtain it legally, I either own it (e.g. I took the picture) or am subject to fair use restrictions (e.g. I got it from your website or something). In that case, your only real way to stop me is with libel law (and similar). If I manipulate the video in such a way as to make false, damaging statements about you, you can sue for libel.

That's my point. So if I only use legally obtained pictures, I can do whatever I want with those for my own personal use. If I share them, I may be subject to libel laws, fair use laws (e.g. if I took it from your website), etc.

So I don't see a realistic defense on privacy grounds for deep fakes; it's going to be fraud and libel laws, assuming the source pictures were obtained legally. The pictures being creepy isn't the issue, at least as far as the courts are concerned.