Study featuring AI-generated giant rat penis retracted entirely, journal apologizes

vice.com

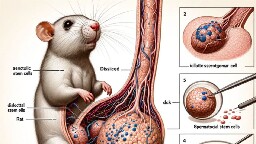

A peer-reviewed study featured nonsensical AI images including a giant rat penis in the latest example of how generative AI has seeped into academia.

Me:

Impactful world news: Pass

Troubling local US news: Pass

News about giant rat penis: Click

sips coffee slowly

I hate the way AI is being used here, but those labels are fucking GOLD.

It's like trying to read in a dream

At least it correctly labelled the Rat, but kinda missed the dck

See Figure 1 for a diagram of retat dck

I loved the labels. Testtomcels.

Ever tried to see what happens when you request “an anatomical diagram of a spider, school book style”? I mean, just start by counting the legs, and once you’ve stopped laughing you can dive into the labels. It’s going to be wild. If you’re into microbiology, try asking for a similar diagram of a prokaryotic cell for extra giggles.

Well that’s a headline I didn’t expect to see this morning.

Regardless of the rights and wrongs of AI generated images, it’s quite concerning something like this makes it into a scientific journal at all.

Yeah, the journal loses a huge amount of credibility with this. Their entire purpose is to be respectable and review submissions with a high degree of scrutiny.

Frontiers in isn't the greatest journal to begin with

Stuart

LittleBigStuart Hugh Mungus

It's not so much the use of AI that's upsetting as it is the "peer review" process. There needs to be a massive change in how journals review studies, before reasonable people start to question every study based on cases like this. How many false studies are currently used for important shit that we just haven't caught yet?

It got published, people noticed it, people saw it was bullshit, it got retracted. Publishing is not the end of the line.

It's an extreme example, but it's still an example of the system working in the end. Reasonable people are supposed to question what they read, not blindly trust it, that's how you catch "important shit".

The problem is not that some bad papers get published. The problem would be them staying unchallenged. And it's also a problem that laymen consider one random study to be undeniable proof of their argument (potentially ignoring the thousands of studies contradicting it).

Of course some things will always slip through the cracks, but this is egregious. What does their peer-review process look like that this passed through it?

Right? Even when skimming papers, it's usually: read title & abstract, look at figures, skim results & conclusion. If you don't notice that the figure doesn't have real words, how is anyone making sure the methodology makes sense? That the results show what the conclusion says they show?

I am not disagreeing that this is ridiculous, I was just saying that this stupidity is not what should convince people not to take some random paper for an absolute truth, just because it was published.

Even if you eliminate fraud, bullshit and even honest mistakes, that's just not how science works.

A shame most people are trained by both the school system and society to just take things at face value

An even greater shame is that almost no people are trained on basic statistics and think they can debunk a published study in PNAS with a Google search and some random guy's blog.

A few things came together for me here.

.

.

.

They don't outright say it in the article, but it looks like the reviewer based in India was the one who actually raised concerns about the garbage images. The authors were supposed to respond, but didn't, and the journal published anyway.

I will readily admit that this is just my own conclusion here, but -- I wonder if there was an element of racism that went into ignoring the reviewer's concerns?

Why do you bring up race? Is there anything that would imply that?

People are lazy and incompetent as fuck, and it's been an industry-wide problem that publishing companies in general have lower and lower standards of quality.

I brought it up purely as speculation, as one possible explanation for why the process was not properly followed. I don't have any experience with publishing companies, whether for science journals or otherwise.

He didn’t bring up race, he brought up location. Like, you’re the one that brought up race? If it was one American reviewer and one Australian reviewer and this poster said “the Australian caught it”, would you have made the same comment you just did?

What if the “reviewer based in India” is white?

Edit: I am a ijit, I actually agree with you, and my “what if person based in India is white” should be directed at the guy you’re replying to.

He literally said

So...

I retract my statement, as I cannot read.

Happens to all of us from time to time.

Check out their controversies section on Wikipedia. This doesn't seem out of character for this publication. It's more likely incompetence than malice.

So many questions!

AI generated medical research can't make it past peer review, it can't hurt you

AI generated medical research that made it past peer review:

I mean "made it past"...

The 2 reviewers both brought up the images as weird, and the journal published anyways, so...

Giant rat penises will only hurt you if you have an underlying medical condition (anal fissures, etc).

ai is going to speedrun us into idiocracy, isn’t it? why learn when you can ask dr. sbaitso to just do it for you?

It's certainly not helping.

We're already dealing with the problem of half the (US) population only believing things when they align with their political views, and now one can't even Google something and be sure that the entire first page of results isn't SEO AI hallucinated misinformation.

Omg, we are in a cyberpunk dystopia aren’t we?

Peer review was already a joke, as exposed a couple years ago by two researchers who got a paper full of BS published.

It's been well established that nearly all published research papers are irreproducible.

How long until there’s an accepted study of the benefits of electrolytes on plants? Probably already exists in Gatorade’s filing cabinet.

Doctooore Sbaitso, please enter your name.

Man I wondered if I'd ever talk to anyone else that used it. I liked asking him to pronounce "abcdefghijklmnopqrstuvwxyz", and he actually did a pretty good job.

I support a law that all AI voices must use the Dr. Sbaitso voice. Imagine the impressive inefficiency of training an AI voice with the output from a 1980s(?) Sound Blaster.

I was out in the snow and mine retracted entirely as well.

"I WAS IN THE POOL!!!!!"

The paper was authored by three scientists in China, edited by a researcher in India, reviewed by two people from the U.S. and India, and published in the open access journal Frontiers in Cell and Developmental Biology on Monday.

Now THAT is Maximum Trolling. I hope someone at Cell Development got fired

South Park strikes again

Jesus, talk about getting rat fucked.

Time for a "dick of a rat" joke

At first I was like "Why" and then I realized the study was about rat penises and not about AI so now I'm furious and I hope that researcher's school rescinds his degrees.

Trust the science

LGTM