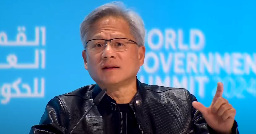

Don’t learn to code: Nvidia’s founder Jensen Huang advises a different career path

vulcanpost.com

Don't learn to code, advises Jensen Huang of Nvidia. Thanks to AI, everybody will soon become a capable programmer simply by using human language.

Founder of company which makes major revenue by selling GPUs for machine learning says machine learning is good.

Yes, but Nvidia relies heavily on programmers itself. Without them Nvidia wouldn't have a single product. The fact that he makes these claims despite this is worth noting.

Lol. They're at the top of the food chain. They can afford the best developers. They do not benefit from competition. As with all leading tech corporations, they are protectionist, and benefit more from stifling competition than from innovation.

Also, more broadly the oligarchy don't want the masses to understand programming because they don't want them to fundamentally understand logic, and how information systems work, because civilization is an information system. It makes more sense when you realize Linux/FOSS is the socialism of computing, and anti-competitive closed source corporations like Nvidia (notorious for hindering Linux and FOSS) are the capitalist class of computing.

Techno-Mage has been trying to warn us this whole time.

It doesn’t make him wrong.

Just like we can now use LLMs to create letters or emails with a tone, it’s not going to be a big leap to allow them to do similar with coding. It’s quite exciting, really. Lots of people have ideas for websites or apps but no technical knowledge to build them. AI may allow it, just like it allows non-artists to create art.

I use AI to write code for work every day. Many different models and services, including https://ollama.ai on my own hardware. It's useful for a developer when they can take the code and refactor it to fit into large code-bases (after fixing its inevitable broken code here and there), but it is by no means anywhere close to actually successfully writing code all on its own. Eventually maybe, but nowhere near anytime soon.

Agreed. I mainly use it for learning.

Instead of googling and skimming a couple of blogs / SO posts, I now just ask the AI. It pulls the exact info I need and sources it all. And being able to ask follow-up questions is great.

It's great for learning new languages and frameworks

It's also very good at writing unit tests.

Also for recommending Frameworks/software for your use case.

I don't see it replacing developers, more reducing the number of developers needed. Like excel did for office workers.

You just described all of my use cases. I need to get more comfortable with copilot and codeium style services again, I enjoyed them 6 months ago to some extent. Unfortunately current employer has to be federally compliant with government security protocols and I'm not allowed to ship any code in or out of some dev machines. In lieu of that, I still run LLMs on another machine acting, like you mentioned, as sort of my stackoverflow replacement. I can describe anything or ask anything I want, and immediately get extremely specific custom code examples.

I really need to get codeium or copilot working again just to see if anything has changed in the models (I'm sure they have.)

It can't tell yet when the output is ridiculous or incorrect for non coding, but it will get there. Same for coding. It will continue to grow in complexity and ability.

It will get there, eventually. I don't think it will be writing complex code any time soon, but I can see it being aware of all the libraries and foss that a person cannot be across.

I would foresee learning to code as similar to learning to do accounting manually. Yes, you'll still need to understand it to be a coder, but for the average person that can't code, it will do a good enough job, like we use accounting software now for taxes or budgets that would have been professionally done before. For complex stuff, it will be human done, or human reviewed, or professional coders giving more technical instructions for ai. For simple coding, like you might write a python script now, for some trivial task, ai will do it.

It might not make him wrong, but he also happens to be wrong.

You can't compare AI art or literature to AI software, because the former are allowed to be vague or interpretive while the latter has to be precise and formally correct. AI can't even reliably do art yet; it frequently requires several attempts or considerable support to get something which looks right, but in software "close" frequently isn't useful at all. In fact, it can easily be close enough to look right at first glance while actually being catastrophically wrong once you try to use it for real (see: every bug in any released piece of software ever).

Even when AI gets good enough to reliably produce what it's asked for first time & every time (which is a long way away for quite a while yet), a sufficiently precise description of what you want is exactly what programmers spend their lives writing. Code is a description of a program which another program (such as a compiler) can convert into instructions for the computer. If someone comes up with a very clever program which can fill in the gaps by using AI to interpret what it's been given, then what they've created is just a new kind of programming language for a new kind of compiler

I don't disagree with your point. I think that is where we are heading. How we interact with computers will change. We're already moving away from keyboard typing and clicks, to gestures and voice or image recognition.

We likely won't even call it coding. Hey Google, I've downloaded all the episodes for the current season of Pimp My PC, can you rename the files by my naming convention and drop them into jellyfin. The AI will know to write a python script to do so. I expect it to be invisible to the user.

So, yes, it is just a different instruction set. But that's all computers are. Data in, data out.
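For a sense of what that invisible script might look like, here is a minimal sketch. The input filename pattern, the show name, and the `Show/Season NN/Show - SxxEyy.ext` layout are all assumptions made up for illustration, not a statement of what Jellyfin requires, and the function names are hypothetical.

```python
import re
import shutil
from pathlib import Path
from typing import List, Optional, Tuple

# Assumed input style: "pimp.my.pc.s02e05.1080p.mkv" (season/episode tag somewhere in the name).
EPISODE_RE = re.compile(r"[Ss](\d{1,2})[Ee](\d{1,2})")


def target_path(filename: str, show: str) -> Optional[Path]:
    """Return the relative destination path, or None if no SxxEyy tag is found."""
    match = EPISODE_RE.search(filename)
    if match is None:
        return None
    season, episode = int(match.group(1)), int(match.group(2))
    new_name = f"{show} - S{season:02d}E{episode:02d}{Path(filename).suffix}"
    return Path(show) / f"Season {season:02d}" / new_name


def move_episodes(source: Path, library: Path, show: str,
                  dry_run: bool = True) -> List[Tuple[Path, Path]]:
    """Plan the moves from source into library; only touch the disk if dry_run is False."""
    moves = []
    for path in sorted(source.iterdir()):
        if not path.is_file():
            continue
        relative = target_path(path.name, show)
        if relative is None:
            continue  # skip files that cannot be parsed rather than guess
        dest = library / relative
        moves.append((path, dest))
        if not dry_run:
            dest.parent.mkdir(parents=True, exist_ok=True)
            shutil.move(str(path), str(dest))
    return moves
```

The dry-run default is deliberate: whoever (or whatever) wrote the script, you probably want to look at the planned moves before letting it rename a whole season.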

Well, the difference is you have to know coding to know whether the AI produced what you actually wanted.

Anyone can read the letter and know whether the AI hallucinated or actually produced what you wanted.

On code: it might produce code that, on the first try, does what you ask. However, it turns out the AI hallucinated a bug into the code for some edge or specialty case.

Hallucinating is not a minor hiccup or minor bug, it is a fundamental feature of LLMs, since the model isn't actually smart. It is a stochastic regurgitator. It doesn't know what you asked or understand what it is actually doing. It is matching prompt patterns to output. With enough training patterns to match, one statistically usually ends up about there. However, this is not guaranteed, and that is the main weakness of the system. More good training data makes it more likely that it produces good results more often. However, for business-critical stuff, for example, you aren't interested in whether it got it about right the 99 other times. It 100% has to get it right, this one time, since this code goes to a production business deployment.

I guess one can code a comprehensive enough verified testing pattern, including all the edge cases, and with that verify the result. However, now you have just shifted the job. Instead of a programmer programming the program, you have a programmer programming the very, very comprehensive testing routines. Those can't be done by an LLM, since the whole point is that the testing routines are there to check for the inherent unreliability of the LLM output.
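A toy illustration of the edge-case point, as a sketch rather than anything from a real project: the first function below is the kind of code that looks right and passes a casual check, and only a test table that deliberately includes the awkward cases exposes it. The function names and the choice of leap years as the example are mine.

```python
from typing import Callable, List


def is_leap_year_plausible(year: int) -> bool:
    """Looks right at first glance, but mishandles century years."""
    return year % 4 == 0


def is_leap_year(year: int) -> bool:
    """Gregorian rule: divisible by 4, except centuries not divisible by 400."""
    return year % 4 == 0 and (year % 100 != 0 or year % 400 == 0)


# Human-written expectations, including the edge cases a quick check would miss.
CASES = {2024: True, 2023: False, 2000: True, 1900: False, 2100: False}


def failures(func: Callable[[int], bool]) -> List[int]:
    """Return the years for which func disagrees with the expected answer."""
    return [year for year, expected in CASES.items() if func(year) != expected]


print(failures(is_leap_year_plausible))  # [1900, 2100]
print(failures(is_leap_year))            # []
```

Note that the table of expected answers is the part that needs a human who understands the problem, which is exactly the shifted job described above.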

It's a nice toy for someone wanting to make a quick and dirty test code (maybe) to do thing X. Then try to find out does this actually do what I asked or does it have unforeseen behavior. Since I don't know what the behavior of the code is designed to be. Since I didn't write the code. good for toying around and maybe for quick and dirty brainstorming. Not good enough for anything critical, that has to be guaranteed to work with promise of service contract and so on.

So the real big job of the future will not be prompt engineers, but quality assurance and testing engineers who have to be around to guard against hallucinating LLMs and similar AIs. Prompts can be gotten from anyone; what is harder is finding out whether the prompt actually produced what it was supposed to produce.

Until somewhere things go wrong and the supplier tries the "but an AI wrote it" as a defense when the client sues them for not delivering what was agreed upon and gets struck down, leading to very expensive compensations that spook the entire industry.

Air Canada already tried that and lost. They had to refund the customer as the chatbot gave incorrect information.

Turns out the chatbot gave the correct information. Air Canada just didn’t realize they had legally enabled the AI to set company policy. :)

I worry for the future generations that can't debug because they don't know how to program and just use AI.

Don't worry, they'll have AI animated stick figures telling them what to do instead...

Having used Chat GPT to try to find solutions to software development challenges, I don't think programmers will be at that much risk from AI for at least a decade.

Generative AI is great at many things, including assistance with basic software development tasks (like spinning up blueprints for unit tests). And it can be helpful filling in code gaps when provided with a very specific prompt... sometimes. But it is not great at figuring out the nuances of even mildly complex business logic.

This.

I got a GitHub Copilot subscription at work and it's useful for suggesting code in small parts, but I would never let it decide what design pattern to use to tackle the problem we are solving. Once I know the solution I can use AI, and verify its output before using it in the code.

I'm using it at work as well and Copilot has been pretty decent with writing out entire methods when I start with the jsdoc or code comments before writing the actual method. It's now becoming my habit to have it generate some near-working code or decent boilerplate.

If you haven't tried it yet, give this a shot!

I'm a junior dev that has been on the job for ~6 months. I found AI to be useful for learning when I had to make an application in Swift and had zero experience of the language. It presented me with some turd responses, but from this it gave me the idea of what to try and what to look into to find answers.

I find that sometimes AI can present a concept to me in a way I can understand, where blogs can fail. I'm not worried about AI right now, it's a tool to make our jobs easier!

Yeah it's great as a companion tool.

Yeah our latest group of juniors were able to get up to speed very quickly.

I think it will get good enough to do simple tickets on its own with oversight, but I would not trust it without it submitting it via a pr for review and iteration.

I agree, it would take at least a decade for fully autonomous programming, and frankly, by the time it can fully replace programmers it will be able to fully replace every office job, at which point we're going to have to rethink everything.

This overglorified snake oil salesman is scared.

Anyone who understands how these models work can see plain as day we have reached peak LLM. It's enshittifying on itself and we are seeing its decline in real time in the quality of generated content. Don't believe me? Go follow some senior engineers.

Any recommendations whom to follow? On Mastodon?

There is a reason they didn't offer specific examples. LLMs can still scale by size, logical optimization, training optimization, and more importantly integration. The current implementation is reaching its limits but growth is also happening very quickly. AI reduces workload, but it is likely going to require designers and validators for a long time.

For sure, evidence is mounting that model size benefit is not returning the quality expected. It's also had the larger net impact of enshittifying itself with negative feedback loops between training data, humans and back to training. This one is being quantified as a large declining trend in quality. It can only get worse as privacy, IP laws and other regulations start coming into place. The growth this hype master is selling is pure fiction.

But he has a lot of product to sell.

And companies will gobble it all up.

On an unrelated note, I will never own a new graphics card.

Secondhand is better value; the cost of new cards right now is nothing short of price fixing. You only need to look at the size reduction in memory since the A100 was released to know what’s happening to GPUs.

We need serious competition. Hopefully Intel is able to provide it, but foreign competition would be best.

I doubt that any serious competitor will bring any change to this space. Why would it - everyone will scream 'shut up and take my money'.

I think you answered your own question: money

The Fediverse is sadly not as popular as we would like, so sorry, I can't help here. That said, I follow some researchers' blogs, and a quick search should land you with some good sources depending on your field of interest.

Why do you think we've reached peak LLM? There are so many areas with room for improvement

You asked the question already answered. Pick your platform and you will find a lot of public research on the topic. Specifically for programming even more so

As a developer building on top of LLMs, my advice is to learn programming architecture. There's a shit ton of work that needs to be done to get this unpredictable, non-deterministic tech to work safely and accurately. This is like saying get out of tech right before the Internet boom. The hardest part of programming isn't writing low-level functions, it's architecting complex systems while keeping them robust, maintainable, and expandable. By the time an AI can do that, all office jobs are obsolete. AIs will be able to replace CEOs before they can replace system architects. Programmers won't go away, they'll just have less busywork to do and instead need to work at a higher level, but the complexity of those higher-level requirements is about to explode and we will need LLMs to do the simpler tasks with our oversight to make sure it all gets integrated correctly.

I also recommend still learning the fundamentals, just maybe not as deeply as you needed to. Knowing how things work under the hood still helps immensely with debugging and creating better more efficient architectures even at a high level.

I will say, I do know developers that specialized in algorithms who are feeling pretty lost right now, but they're perfectly capable of adapting their skills to the new paradigm, their issue is more of a personal issue of deciding what they want to do since they were passionate about algorithms.

the day programming is fully automated, so will other jobs.

maybe it'd make more sense if he suggested to be a blue collar worker instead.

Humans can probably still look forward to back-breaking careers of manual labor that consist of complex, varied movements!

Humans wear out rather quickly compared to robots

Humans can be cheaper than robots if properly exploited!

Finally someone gets it! Gets where we are heading that is.

I'm not so sure about that; it depends on the job and the worker rights of the place where you're setting up shop.

At best, in the near term (5-10 years), they'll automate the ability to generate moderate complexity classes and it'll be up to a human developer to piece them together into a workable application, likely having to tweak things to get it working (this is already possible now with varying degrees of success/utter failure, but it's steadily improving all the time). Additionally, developers do far more than just purely code. Ask any mature dev team and those who have no other competent skills outside of coding aren't considered good workers/teammates.

Now, in 10+ years, if progress continues as it has without a break in pace... Who knows? But I agree with you, by the time that happens with high complexity/high reliability for software development, numerous other job fields will have already become automated. This is why legislation needs to be made to plan for this inevitability. Whether that's thru UBI or some offshoot of it or even banning automation from replacing major job fields, it needs to be seriously discussed and acted upon before it's too little too late.

Well. That's stupid.

Large language models are amazingly useful coding tools. They help developers write code more quickly.

They are nowhere near being able to actually replace developers. They can't know when their code doesn't make sense (which is frequently). They can't know where to integrate new code into an existing application. They can't debug themselves.

Try to replace developers with an MBA using a large language model AI, and once the MBA fails, you'll be hiring developers again - if your business still exists.

Every few years, something comes along that makes bean counters who are desperate to cut costs, and scammers who are desperate for a few bucks, declare that programming is over. Code will self-write! No-code editors will replace developers! LLMs can do it all!

No. No, they can't. They're just another tool in the developer toolbox.

I've been a developer for over 20 years and when I see Autogen generate code, decide to execute that code and then fix errors by making a decision to install dependencies, I can tell you I'm concerned. LLMs are a tool, but a tool that might evolve to replace us. I expect a lot of software roles in ten years to look more like an MBA that has the ability to orchestrate AI agents to complete a task. Coding skills will still matter, but not as much as soft skills will.

I really don't see it.

Think about a modern application. Think about the file structure, how the individual sources interrelate, how non-code assets are stored, how applications are deployed, and all the other bits and pieces that go into an application. An AI can't know any of that without being trained - by a human - on the specifics of that application's needs.

I use Copilot for my job. It's very nice, and makes my job easier. And if my boss fired me and the rest of the team and tried to do it himself, the application would be down in a day, then irrevocably destroyed in a week. Then he'd be fired, we'd be rehired, and we - unlike my now-former boss - would know things like how to revert the changes he made when he broke everything while trying to make Copilot create a whole new feature for the application.

AI code generation is pretty cool, but without the capacity to know what code actually should be generated, it's useless.

It's just going to create a summary story about the code base and reference that story as it implements features, not that different than a human. It's not necessarily something it can do now but it will come. Developers are not special, and I was never talking about Copilot.

I don't think most people grok just how hard implementing that kind of joined-up thinking and metacognition is.

You're right, developers aren't special, except in those ways all humans are, but we're a very long way indeed from being able to simulate them in AI - especially in large language models. Humans automatically engage in joined-up thinking, second-order logic, and so on, without having to consciously try. Those are all things a large language model literally can't do.

It doesn't know anything. It can't conceptualize a "summary story," or understand parts that it might get wrong in such a story. It's glorified autocomplete.

And that can be extraordinarily useful, but only if we're honest with ourselves about what it is and is not capable of.

Companies that decide to replace their developers with one guy using ChatGPT or Gemini or something will fail, and that's going to be true for the foreseeable future.

Try for a second to think beyond what they're able to do now and think about the future. Also, educate yourself on Autogen and CrewAI, you actually haven't addressed anything I said because you're too busy pontificating.

I am. In the future, they will need to be able to perform tasks using joined-up thinking, second-order logic, and metacognition if they're going to replace people like me with AI. And that is a very hard goal to achieve. Maybe not P = NP hard, but by no means trivial.

I have. My company looked at Autogen. We concluded it wasn't worth it. The solution to AI agents not being able to actually understand what they're doing isn't to amplify the problem by creating teams of them.

Every few years, something new comes along driven by incredible hype, and people declare programming to be dead. They insist a robot will be able to do my job. I have yet to see a technology that will plausibly do that in ten years, let alone now. And all the hype is built on a foundation of ignorance over how complicated a modern, enterprise-ready application is, and how necessary being able to think about its many moving parts is.

You know who doesn't suffer from that ignorance? Microsoft, the creators of Autogen. And they're currently hiring developers, not laying them off and replacing them with Autogen.

Well, I sometimes see a few tools at my job that are supposed to be kinda usable by people like that. In reality they can't use them 90% of the time.

That'd be because many people think that engineers deal in intermediate technical details, and the general idea is clear for this MBA. In fact it's not.

Ok.

You remember when everyone was predicting that we are a couple of years away from fully self-driving cars. I think we are now a full decade after those couple of years and I don't see any fully self driving car on the road taking over human drivers.

We are now in the honeymoon phase of AI, and I can only assume that there will be a huge downward correction of some AI stocks that are overvalued and overhyped, like NVIDIA. They are like crypto stocks: on the moon now, back to Earth tomorrow.

Two decades. DARPA Grand Challenge was in 2004.

Yeah, everybody always forgets the hype cycle and the peak of inflated expectations.

Waymo exists and is now moving passengers around in three major cities. It's not taking over yet, but it's here and growing. The timeframe didn't meet the hype but the technology is there.

Yes, the technology is there but it is not Level 5, it is 3.5-4 at best.

The point with a full self-driving car is that complexity increases exponentially once you reach 98-99% and the last 1-2% are extremely difficult to crack, because there are so many corner cases and cases you can't really predict and you need to make a car that drives safer than humans if you really want to commercialize this service.

Same with generative AI, the leap at first was huge, but comparing GPT 3.5 to 4 or even 3 to 4 wasn't so great. And I can only assume that from now on achieving progress will get exponentially harder and it will require usage of different yet unknown algorithms and models and advances will be a lot more modest.

And I don't know about you, but ChatGPT isn't 100% correct, especially when asked more niche questions or sent more complex queries. It often hallucinates, and sometimes those hallucinations sound extremely plausible.

Quantum computing is going to make all encryption useless!! Muwahahahahaaa!

. . . Any day now . . Maybe- ah! No, no thought this might be the day, but no, not yet.

Any day now.

If you're able to break everybody's encryption, why would you tell anybody?

If you were able to generate near life-like images and simulacra of human speech, why would you tell anyone?

Money. The answer is money.

Quantum computing wouldn't be developed just to break encryption, the exponential increase in compute power would fuel a technological revolution. The encryption breaking would be the byproduct.

Lmao do the opposite of whatever this guy says, he only wants his 2 trillion dollar stockmarket bubble not to burst

This seems as wise as Bill Gates claiming 4MB of RAM is all you'll ever need back in '98 🙄

It's just as crazy as saying "We don't need math, because every problem can be described using human language".

In other words, that might be true as long as your problem is not complex enough to be able to be understood using human language.

You want to solve a real problem? It's way more complex with so many moving parts you can't just take LLM to solve it, because that takes an actual understanding of a problem.

Ha

If you ever write code for a living, the first thing you notice is that people can't explain what they need using natural language (which is what English, Mandarin, etc. are), even if they don't need to get into details.

Also, natural language can be vague and confusing. Look at legalese and law statutes. "When it comes to the law, NOTHING is understood!" -- Dragline

Maybe more apt for me would be, “We don’t need to teach math, because we have calculators.” Like…yeah, maybe a lot of people won’t need the vast amount of domain knowledge that exists in programming, but all this stuff originates from human knowledge. If it breaks, what do you do then?

I think someone else in the thread said good programming is about the architecture (maintainable, scalable, robust, secure). Many LLMs are legit black boxes, and it takes humans to understand what’s coming out, why, is it valid.

Even if we have a fancy calculator doing things, there still needs to be people who do math and can check. I’ve worked more with analytics than LLMs, and more times than I can count, the data was bad. You have to validate before everything else, otherwise garbage in, garbage out.

It sounds like a poignant quote, but it also feels superficial. Like, something a smart person would say to a crowd to make them say, “Ahh!” but that also doesn’t hold water for long.

And because they are such black boxes, there's the sector of Explainable AI which attempts to provide transparency.

However, in order to understand data from explainable AI, you still need domain experts that have experience in interpreting what that data means and how to make changes.

It's almost as if any reasonably complex string of operations requires study. And that's what tech marketing forgets. As you said, it all has to come from somewhere.

I don't see how it would be possible to completely replace programmers. The reason we have programming languages instead of using natural language is that the latter has ambiguities. If you start having to describe your software's behaviour in natural language, then one of three things can happen:

And if you don't know how to code, how do you even know if it gave you the output you want until it fails in production?

But that’s not what the article is getting at.

Here’s an honest take. Let me preface this with some credentials: I’m an AI Engineer with many years in the field. I’m currently working directly on multiple projects that augment and automate code generation, documentation, completion and even system design/understanding. We’re not there yet. But the pace at which we are improving our code-AI is astounding. Exponential growth in capability and accuracy and utility.

As an anecdotal example; a few years ago I decided I would try to learn Rust (programming language), because it seemed interesting and we had a practical use case for a performant, memory-efficient compiled language. It didn’t really work out for me, tbh. I just didn’t have the time to get very fluent with it enough to be effective.

Now I’m on a project which also uses Rust. But with ChatGPT and some other models I’ve deployed (Mixtral is really good!) I was basically writing correct, effective Rust code within a week—accepted and merged to main.

I’m actively using AI code models to write code to train, fine-tune, and deploy AI code models. See where this is going? That’s exponential growth.

I honestly don’t know if I’d recommend to my young kids programming as a career now even if it has been very lucrative for me and will take me to my retirement just fine. It excites me and scares me at the same time.

There is more to a program than writing logic. Good engineers are people who understand how to interpret problems and translate the inherent lack of logic in natural language into something that machines are able to understand (or vice versa).

The models out there right now can truly accelerate the speed of that translation - but translation will still be needed.

An anecdote for an anecdote. Part of my job is maintaining a set of EKS clusters where downtime is... undesirable (five nines...). I actively use chatgpt and copilot when adjusting the code that describes the clusters - however these tools are not able to understand and explain impacts of things like upgrading the control plane. For that you need a human who can interpret the needs/hopes/desires/etc of the stakeholders.

Yeah I get it 100%. But that’s what I’m saying. I’m already working on and with models that have entire codebase level fine-tuning and understanding. The company I work at is not the first pioneer in this space. Problem understanding and interpretation— all of what you said is true— there are causal models being developed (I am aware of one team in my company doing exactly that) to address that side of software engineering.

So. I don’t think we are really disagreeing here. Yes, clearly AI models aren’t eliminating humans from software today; but I also really don’t think that day is all that far away. And there will always be need for humans to build systems that serve humans; but the way we do it is going to change so fundamentally that “learn C, learn Rust, learn Python” will all be obsolete sentiments of a bygone era.

Let's be clear - current AI models are being used by poor leadership to remove bad developers (good ones don't tend to stick around). This, however, does place some pressure on the greater tech job market (but I'd argue no different than any other downturn we have all lived through).

That said, until the issues with being confidently incorrect are resolved (and I bet people a lot smarter than me are tackling the problem), it's nothing better than a souped-up IDE. Now, if you have a public resource you can point me to that can look at a meta repo full of dozens of tools and help me convert the Python scripts that are wrappers of wrappers (and so on) into something sane, I'm all ears.

I highly doubt we will ever get to the point where you don't need to understand how an algorithm works - and for that you need to understand core concepts like recursion and loops. As human brains are designed for pattern recognition - that means writing a program to solve a sudoku puzzle.
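For anyone who hasn't done that exercise, here is roughly what it looks like: a minimal recursive backtracking sketch, assuming a 9x9 grid stored as a list of lists with 0 for empty cells. It is one plain way to do it, not an efficient or definitive solver, and the function names are just illustrative.

```python
from typing import List

Grid = List[List[int]]  # 9x9, 0 marks an empty cell


def is_valid(grid: Grid, row: int, col: int, digit: int) -> bool:
    """Check that digit does not already appear in the row, column, or 3x3 box."""
    if digit in grid[row]:
        return False
    if any(grid[r][col] == digit for r in range(9)):
        return False
    box_row, box_col = 3 * (row // 3), 3 * (col // 3)
    return all(
        grid[r][c] != digit
        for r in range(box_row, box_row + 3)
        for c in range(box_col, box_col + 3)
    )


def solve(grid: Grid) -> bool:
    """Fill the grid in place; return True if a solution was found."""
    for row in range(9):          # loops: scan for the next empty cell
        for col in range(9):
            if grid[row][col] != 0:
                continue
            for digit in range(1, 10):
                if is_valid(grid, row, col, digit):
                    grid[row][col] = digit
                    if solve(grid):       # recursion: solve the rest of the grid
                        return True
                    grid[row][col] = 0    # backtrack: undo and try the next digit
            return False          # no digit fits here, so an earlier guess was wrong
    return True                   # no empty cells left
```

The whole thing is loops plus one recursive call plus an undo step, which is why it makes a decent test of whether those core concepts have actually sunk in.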

Jensen fucking Huang is a piece of shit and chock-full of it too

Actually, AI can replace this dick at a fraction of the cost instead of replacing developers. Bring out the guillotine mfs

Your vulgarity and call to violence are quite convincing, sir. Mayhaps you moonlight as a bard?

Lets guillotine the Bard guy too.

You know what, let's do a Stalin-style purge of Silicon Valley suits. New people with, perhaps, less evil ideas can take their place.

I think this is bullshit regarding LLMs, but making and using generative tools more and more high-level and understandable for users is a good thing.

Like various visual programming means, where you sketch something working via connected blocks (like PureData for sounds), or in Matlab I think one can use such constructors to generate code for specific controllers involved in the scheme, or like LabView.

Or like HyperCard.

Not that anybody should stop learning anything. There's a niche for every way to do things.

I just like that class of programs.

Doubt

Why would he lie? Other than to pump the company's shares

I can kind of see his point, but the things he is suggesting instead (biology, chemistry, finance) don't make sense for several reasons.

Besides the obvious "why couldn't AI just replace those people too" (even though it may take an extra few years), there is also a question of how many people can actually have deep enough expertise to make meaningful contributions there - if we're talking about a massive increase in the number of people going into those fields.

I mean why have a CS degree when an AI subscription costs $30/month?

/s

There’s good money to be made in selling leather jackets.

I think the Jensen quote loosely implies we don't need to learn a programming language but the logic was flimsy. Same goes for the author as they backtrack a few times. Not a great article in my opinion.

Jensen's just trying to ride the AI bubble as far as it'll go, next he'll tell you to forget about driving or studying

It's not really about the coding, it's about the process of solving the problem. And AI is very far away from being able to do that. The language you learn to code in is probably not the one you will use for much of your life. It will just get replaced by whichever AI you will use to code.

Yep. The best guy on my team isn't the best coder. He's the best at visualizing the complete solution and seeing pinch points in his head.

So TIL 'prompt engineer' is now a thing. We're doomed, aren't we?

Yay! Another job that's impossible to fail at for those who are already well-off.

Don't tell me what to do. Going to spend more time learning to code from now on, thanks.

Nvidia is such a stupid fucking company. It's just slapping different designs onto TSMC chips. All our "chip companies" are like this. In the long run they are all going to get smoked. I won't tell you by whom. You shouldn't need a reminder.

Designing a chip is something completely different from manufacturing them. Your statement is as true as saying TSMC is such a stupid company, all they are doing is using ASML machines.

And please tell me, I have no clue at all who you're talking about.

The Chinese? I think their claim to fame is making processes stolen from TSMC work using pre-EUV lithography. Expensive AF because slow but they're making some incredibly small structures considering the tech they have available. Russians are definitely out of the picture they're in the like 90s when it comes to semiconductors and can't even do that at scale.

And honestly I have no idea where OP is even from, "All our chip companies". Certainly not the US, since not all US chip companies are fabless: IBM, TI and Intel are IDMs. In Germany IDMs predominate, Bosch and Infineon, though there's of course also some GlobalFoundries here, that's pure play, and so will be the TSMC-Bosch-NXP-Infineon joint venture ESMC. Korea and Japan are also full of IDMs.

Maybe Britain? ARM is fabless, OTOH ARM is hardly British any more.

Amazon is fabless for their chip design unit, they're all little mini design units for shit like datacenters.

It's hilarious you're saying that because Intel labelled itself an investor in USA foundry projects you think they are exempt from this. Okay man, go work at the plants in Ohio and Arizona. Oh wait, they don't fucking exist bruh

Intel, TI and IBM all made chips before pure-play and fabless were even a thing, and are still doing so. Intel has 16 fabs in the US, TI 8, IBM... oh, they sold their shit, I thought they still had some specialised stuff for their mainframes. Well, whatever.

Of all companies, the likes of Amazon and Google not fabbing their own chips should hardly be surprising. They're data centre operators, they don't even sell chips, if they set up fabs they'd have to start doing that, or compete with TSMC to not have idle capacity standing around costing tons of money. A fab is not like a canteen which you can expect to actually be in use all the time so there's going to be no need to compete in the restaurant business to make it work.

And that's only really looking at logic chips, e.g. Micron also has fabs at home in the US.

None of those companies even make a blip on global chip production though. Are they for research or something? Why should I give a shit about a tiny technically existing fraction of production that will never expand?

Go look at where there has been actual foundry production for decades. None of the companies you mentioned even exist in foundry. Who cares if they have A facility or two? That's just part of figuring out what they're going to order from TSMC.

Your goalposts, they are moving.

The US has the know-how to produce modern chips at scale, or at least not too far behind in strategic terms. You could bring all production home if that's what you wanted, it'd cost a lot of money but it's simply a policy issue. And Amazon wouldn't suddenly start to run fabs they'd hire capacity from Intel or whomever.

...you'd still be reliant on European EUV machines, though. Everyone is, if you intend to produce very modern chips at scale. But if your strategic interest is making sure that the DMV has workstations and the military guidance computers that's not necessary, pre-EUV processes are perfectly adequate.

You are the one moving the goalposts with your boasts about how these companies make up LITERALLY an INFINITESIMAL portion of global chip production. Even if you cut out Samsung and TSMC they wouldn't be global players.

No, we can't just bring all production home lol. We've been saying we will for years. Where is the foundry in Ohio dude? Where is the Arizona foundry that's supposed to bolster TSMC production?

Lol yeah sure go ask ASML how their business is doing rn in light of the US chip war sanctions. European manufacturing is in as dire a state as the US now due to financialization and now the skyrocketing energy costs.

People said this about our military production too. "Oh, Russia messed up now, we're going to get serious and amp up our military production." 🦗🦗🦗🦗🦗🗓️🗓️🗓️🗓️ (time loudly passing and nothing happening)

How many times is it going to take for people to learn it gets transmuted directly into stock buybacks lmao? We don't have the electrical grid to build up our manufacturing base in the modern world yet. The US is a giant casino for the elite of our empire full of slums.

You won't hear me disagree with that. But to say, and I quote you directly:

While Intel might very well take the tech crown (gate all around with backside power) from TSMC this year is wildly incorrect.

"Skyrocketing", yeah. Gas looks similar.

And no, European manufacturing is not in nearly as dire a state as in the US. For that to be the case we'd have to have as shoddy infrastructure and decades-long underinvestment and offshoring as the US has. The US has in fact a more advanced chip industry than Europe: We're good at the basic science, we're good at bulk production of specialised stuff, one thing that we're not great at is top-tier CPUs and GPUs, chips that are their own products, what we produce is the usual "the thing that goes into a thing that goes into a thing you buy". Like, random example, pretty much every smartphone in the world uses a Bosch gyroscope and they produce those things in-house.

But that doesn't mean that the US is fucked, in the least: If need be it would be able to spring back to life quite quickly. Thing is, needs do not be, so if your worry is elite casinos maybe don't focus so much on chips and incorrect statements about US capacity there but on said elite casino directly.

Yeah the casino bit is the most important part for sure. In light of how financialized everything is, huge costs, massively inflated financial asset & real estate prices etc, labor costs, it's more likely for Detroit to spring back into being an industrial hub.

We focus all of our political energy on monopolizing the top of the value chain TSMC is a part of and we can't replace it with our own production bc it's so crucial for cutting costs down. They can't even expand the production for lower end chips (ROI isn't there) now so Russia and China are gonna scoop up orders from expansion in the many industries that use them (low end chips were like 20% of TSMC's revenue recently, iirc). Which will help them develop their higher end foundries which they definitely can make I mean Rosatom produces super high quality Xray mirrors and the Chinese govt won't balk at industrial investment or high tech training programs.

ASML's whole position in this convoluted supply chain means they only make those shipping container sized thingies with the rube goldberg machine of mirrors hooked up to a tin plasma light thingy, and that ultimately limits the minimum nanometer size of the circuits made in the fabrication units they ship out. If I'm getting that right 🤪. This is really futuristic stuff I'm talking about now but the next-next gen fabrication units beyond Russia and China catching up could even be hooked up to a particle accelerator. That's pretty hard to export in the same way.

I just don't see how we can politically or financially solve these problems in the US or EU lol. We're kind of caught by the balls as workers no?

You don't need to fab chips to have a job. Let the Taiwanese have their speciality you have yours what's wrong with that.

Also TSMC's company culture is excessively Confucian you probably wouldn't want to work there anyway. It's not just the company and culture that makes the company but also things like Taiwanese universities churning out masses of highly-skilled electrical engineers, basically the only reason TSMC even agreed to that European joint-venture is because Dresden's universities have been focussed on that exact field for decades, even before reunification. For a similar reason you couldn't just take Zeiss and move it out of Jena: They need the local university to funnel students into their workforce. There's no better place to study optics in the world than Jena.

Which actually brings me to another point: All these are labour aristocracy jobs, not just trained but highly educated, comparable to a doctor at a hospital. They have a lobby, they have a good bargaining position. Worrying about them won't do anything for the burger flipper at your local fast-food joint who tends to have neither. It also won't really do much for the injection moulding machine operator producing tea sieves (sorry I was just admiring one it has stainless steel mesh embedded in it, not easy to produce, made in Germany, not cheap but oh gods is that thing worth the extra three bucks (it was five)).

sure, they're labor aristocracy jobs bc they're at the tip top of the global supply chain, but most people do not partake in that at all, or management etc, or legal/medical/whatever other high end shit, and 50% of the US is in crappy service work like mcdonalds literally.

no matter what industry you work in you can only be pessimistic here lmao unless you're like in finance or useless c suite shit

i'm not crying for the TSMC foundry or trying to work there. i hope the NATO+ intelligence services edge from high end chip production being under our control is unseated, it would be good for all of us

what's going on with TSMC is indicative of wider issues with all kinds of US industries I'm in solar and frankly I plan to gtfo in the long term to a more interesting area of development. I don't expect it to make my life easier per se but there are a lot of reasons.

Oh also insurance prices and shit like that here are a nightmare. Legal is too obviously. That's why Nike could never just move all their production here even though it would be trivial to teach people to make shoes. The convoluted global supply chain is the whole point

Except what NVIDIA is doing can be done by numerous other chip design firms, TSMC cannot be replaced.

Funny enough we now have prompt engineering which is specifically for talking to AI.

I'm so sick of the hyper push for specialization, it may be efficient but it's soul crushing. IDK maybe it's ADHD but I'd rather not do any one thing for more than 2 weeks.

What hyper push? I can't think of a time in history ever when somebody with two weeks of experience was in demand

Most people got jobs with no experience, or even education less than 40 years ago as long as they showed up and acted confident. Nowadays entry level internships want MScs and years of work xp with something that was invented yesterday

You mistake 'businesses were more willing to take a chance' for 'businesses didn't want experience'. Unless it's a bullshit retail job, anybody with no experience is a net burden their first month.

I don't think he's seen the absolute fucking drivel that most developers have been given as software specs before now.

Most people don't even know what they want, let alone be able to describe it. I've often been given a mountain of stuff, only to go back and forth with the customer to figure out what problem they're actually trying to solve, and then do it in like 3 lines of code in a way that doesn't break everything else, or tie a maintenance albatross around my neck for the next ten years.

Yesterday, I had to deal with a client that literally contradicted himself 3 times in 20 minutes, about whether a specific Date field should be obligatory or not. My boss and a colleague who were nearby started laughing once the client went away, partly because I was visibly annoyed at the indecision.

"I'm coming fo dat ass, Jensen"

Lisa Su, probably.

Good thing I majored in computer engineering instead of computer science. I have a backdoor through electrical engineering.

"Time to pull the ladder up!"

That's how this statement and the state of the industry feels. The AI tools are empowering senior engineers to be as productive as a small team, so even my company laid off all the junior engineers.

So who's coming up behind the senior engineers? Is the AI they use going to take the reins when they retire? Nope, the companies will be fucked.

"That's a future CEO's problem, not mine!" - Current CEO